Here's something most agencies won't tell you: we build our own products. Not as passion projects or things we tinker with on slow Fridays. As real, deliberate investments in staying close to the technology we advise clients on. Because there's a meaningful difference between understanding a tool conceptually and having actually shipped something with it.

SLIDEFACTORY has been in operation since 2018, and the experience behind it goes back almost 30 years in the agency space. In that time, the single biggest thing that has separated good advice from great advice is whether the person giving it has skin in the game. So we decided to put some in.

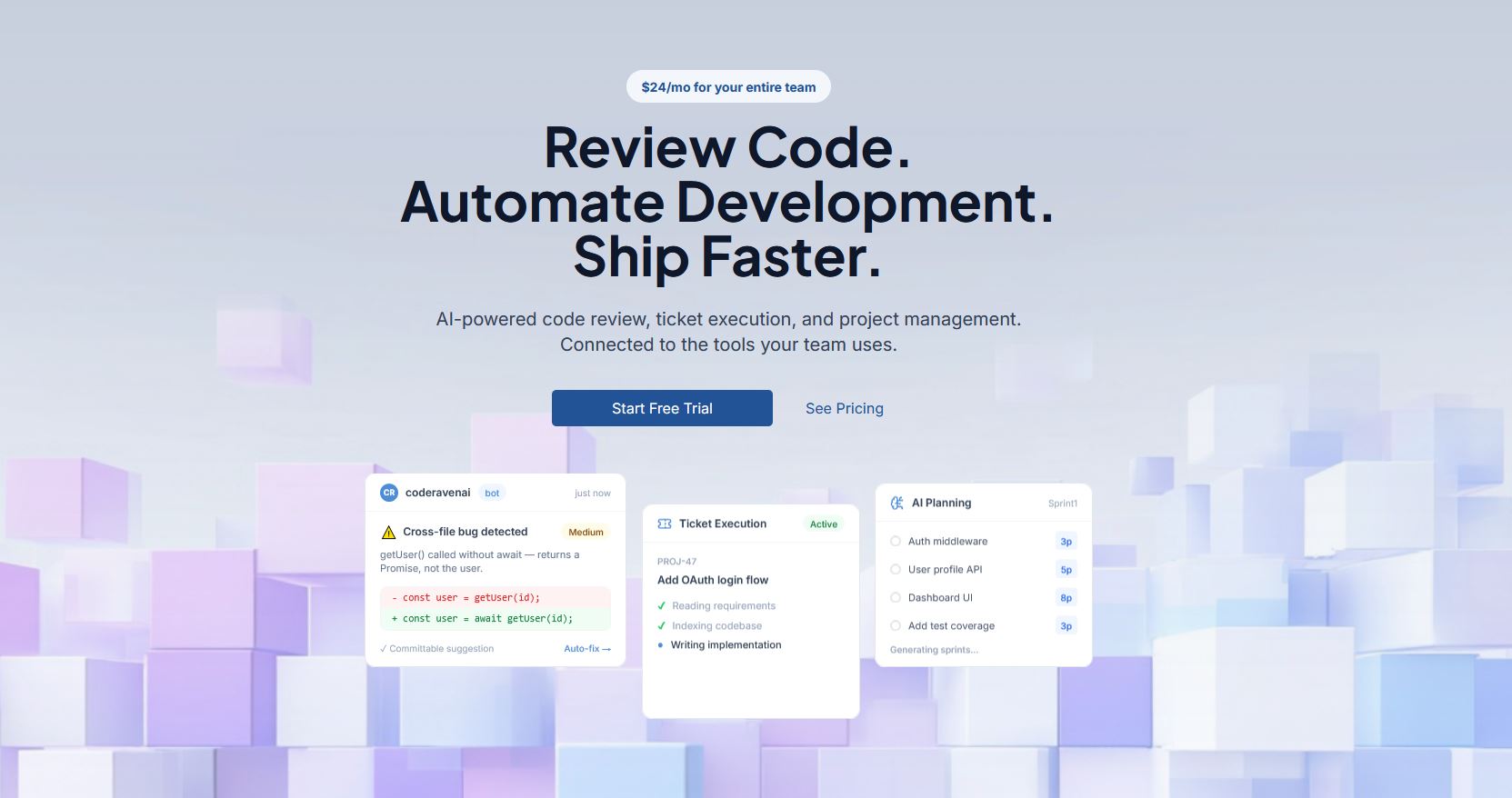

One of the products that came out of that is CodeRaven — an AI-powered development platform built for teams who want to move faster without sacrificing code quality or adding headcount. It started as a code review tool. It's grown into something that can help teams plan work, execute it, and close the loop on low-risk issues automatically. This article is about why we built it, what the process taught us, and who we think should be paying attention to it.

The Code Review Problem Is Hiding in Plain Sight

Talk to almost any engineering team about their review process and you'll hear some version of the same thing: it works fine, mostly, when the right people have bandwidth. The "mostly" and the "when" are doing a lot of heavy lifting in that sentence.

Manual code review is expensive in a way that doesn't show up cleanly on a spreadsheet. It costs attention. A senior developer reviewing a pull request isn't just reading code — they're holding the entire architecture in their head, tracking edge cases, and context-switching away from their own work to do it. When that review cycle stretches into the next day, the author has already moved on. The feedback arrives cold, the fix takes longer than it should, and everyone absorbs a small invisible tax that compounds across dozens of PRs a week.

Linters and static analysis tools help at the edges. But they don't answer the questions that actually block a merge: Is this logic sound? Does it introduce performance risk? Is it consistent with how the rest of the codebase approaches this pattern? Those questions require context, and context is exactly what traditional automated tooling doesn't have.

That is the gap we built CodeRaven to close.

What CodeRaven Is (and Is Not)

CodeRaven connects to your repository and provides structured, contextual AI feedback on every pull request. It flags logic issues, identifies security risks, and surfaces the kind of problems that normally come up in a senior developer's first pass through a diff. It understands your codebase's patterns and gives feedback relative to how your team actually writes code, not some generic standard.

It's not a replacement for human review. We want to be clear about that because the framing matters. The goal is to clean up the low-to-mid tier of issues before a human ever opens the PR, so that when they do sit down to review, they're spending their attention on decisions that actually require judgment. The AI takes the first pass. The human takes the last one. That division of labor is where the real time savings come from.

Plans start at $24 per month for the Pro tier and $65 per month for Team, which adds collaboration features and broader context. Both are sized for growing engineering teams, not enterprise procurement cycles.

From Review to Execution: Planning and Building With Repo Context

Code review is where most AI development tools stop. CodeRaven doesn't.

One of the things we built into the platform is the ability to use your actual repository as the foundation for planning work — not just reviewing it. CodeRaven can read your codebase, understand its structure and patterns, and use that context to help you scope tasks, build out a sprint, and then auto-execute that work. It's the difference between an AI tool that reacts to code and one that participates in the development cycle from the beginning.

This matters because most of the friction in software development doesn't live in the code itself. It lives in the planning layer — in the gap between what a ticket says and what actually needs to happen in the codebase to ship it. When the system already understands your repo, that gap gets a lot smaller. Work gets scoped more accurately, implementation stays consistent with existing patterns, and the back-and-forth between planning and execution compresses significantly.

It's still review at its core — the same feedback loop, the same commitment to catching issues before they compound. It's just that the loop now starts earlier and runs further than a traditional code review tool ever could.

Automated PR Remediation: Letting the System Close the Loop

Here's a feature that tends to get people's attention: CodeRaven can automatically fix pull requests, if you want it to.

Not all PRs are equal. Some require genuine human judgment — architectural decisions, product tradeoffs, things where context and experience matter. Others are straightforward: a naming inconsistency, a missing null check, a pattern that doesn't match the rest of the codebase. These low-risk issues still take time. Someone has to catch them, comment on them, wait for the author to fix them, and review again. That cycle adds up.

Automated PR remediation gives teams the option to let CodeRaven handle those fixes directly. When the system identifies an issue it's confident is low-risk, it can apply the correction without pulling a developer back into the loop. The team controls when and how this is enabled — it's opt-in, and the risk threshold is configurable. But for teams that are comfortable with it, it meaningfully shortens the time between "PR submitted" and "PR merged."

It's a natural extension of the review philosophy. If the first pass belongs to the AI and the last pass belongs to the human, automated remediation is what happens in between — the AI not just identifying what needs to change, but actually making the change when the call is clear enough to make.
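
To make the opt-in and risk-threshold ideas concrete, here is a minimal Python sketch of how such a gate could work. It is purely illustrative and does not reflect CodeRaven's actual configuration format or internals: the names `Risk`, `RemediationPolicy`, `Finding`, `should_auto_fix`, and `triage` are hypothetical, and the `min_confidence` setting is our assumption about how "confident it's low-risk" might be expressed.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import List, Tuple


class Risk(IntEnum):
    """Hypothetical risk levels, ordered so they can be compared."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class RemediationPolicy:
    """Hypothetical team-level settings for automated fixes."""
    enabled: bool = False         # opt-in: nothing is auto-fixed by default
    max_risk: Risk = Risk.LOW     # highest risk level eligible for auto-fix
    min_confidence: float = 0.9   # assumed confidence cutoff (illustrative)


@dataclass(frozen=True)
class Finding:
    """One issue raised during the AI first pass (hypothetical shape)."""
    rule: str
    risk: Risk
    confidence: float


def should_auto_fix(finding: Finding, policy: RemediationPolicy) -> bool:
    """Return True only if the team opted in and the finding is within limits."""
    if not policy.enabled:
        return False
    return (
        finding.risk <= policy.max_risk
        and finding.confidence >= policy.min_confidence
    )


def triage(
    findings: List[Finding], policy: RemediationPolicy
) -> Tuple[List[Finding], List[Finding]]:
    """Split findings into (auto-fixable, needs a human)."""
    auto: List[Finding] = []
    human: List[Finding] = []
    for finding in findings:
        (auto if should_auto_fix(finding, policy) else human).append(finding)
    return auto, human


findings = [
    Finding("naming-inconsistency", Risk.LOW, 0.97),
    Finding("missing-null-check", Risk.LOW, 0.72),
    Finding("architecture-change", Risk.HIGH, 0.95),
]

# With the default policy nothing is touched; opting in routes only the
# clear, low-risk finding to automation and leaves the rest for review.
auto_default, human_default = triage(findings, RemediationPolicy())
auto_opt_in, human_opt_in = triage(findings, RemediationPolicy(enabled=True))
```

In this sketch the default policy sends everything to a human, and an opted-in team sees only the high-confidence naming fix applied automatically. The low-confidence null check and the architectural change still land in front of a reviewer, which matches the first-pass and last-pass split described above.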

What We Learned Building It

The most useful part of building CodeRaven wasn't shipping the product. It was what happened during the process of building it.

We used AI tools throughout the entire development lifecycle — not just for writing code, but for architecture decisions, spec drafting, testing strategy, and evaluating model selection tradeoffs. Building a code review tool while relying on AI assistance for our own code created a feedback loop that taught us things we couldn't have gotten from a whitepaper or a vendor conversation.

The clearest lesson: AI-generated code produces a very specific class of problem. The output is clean, often structurally reasonable, and frequently wrong in ways that only become visible with full codebase context. The code looks like it should work. It may even pass basic tests. The issues live in assumptions about data shapes, edge case behavior, and integration patterns that the model simply did not have access to when it generated the output.

That's not an argument against using AI to write code. It's an argument for closing the feedback loop faster, which is exactly what CodeRaven does. The two ideas point in the same direction.
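
A small, made-up Python example shows the kind of data-shape assumption we mean. Everything here is hypothetical, including the `Order` type and the rule that a pending order has no amount yet. The point is only that the first function looks clean and passes a basic test, while the problem appears once you know how the rest of the codebase represents orders.

```python
from typing import List, Optional, TypedDict


class Order(TypedDict):
    """Hypothetical order record; amount is None while an order is pending."""
    id: str
    amount: Optional[float]


def total_naive(orders: List[Order]) -> float:
    """Plausible generated code: assumes every order has a numeric amount."""
    return sum(order["amount"] for order in orders)


def total_context_aware(orders: List[Order]) -> float:
    """Same intent, but respects the codebase convention for pending orders."""
    return sum(
        order["amount"] for order in orders if order["amount"] is not None
    )


# The "basic test" data: every order is settled, so both versions agree.
settled: List[Order] = [
    {"id": "a1", "amount": 10.0},
    {"id": "a2", "amount": 5.5},
]

# Data shaped the way the (hypothetical) codebase actually produces it.
with_pending: List[Order] = settled + [{"id": "a3", "amount": None}]

happy_path_total = total_naive(settled)
real_world_total = total_context_aware(with_pending)

try:
    total_naive(with_pending)
    naive_failed = False
except TypeError:
    # Adding a float and None is not defined, so the naive version breaks.
    naive_failed = True
```

Nothing in the naive version would trip a linter, and a test written against settled orders would pass. The failure only shows up with knowledge of how pending orders are stored, which is the kind of context a reviewer with full visibility of the codebase can supply.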

Why This Feeds Back Into Client Work

The agencies that are going to be genuinely useful to product companies over the next decade aren't the ones that can describe AI tools with confidence in a meeting. They're the ones that have shipped products with them, hit the walls, made the tradeoffs, and developed real judgment from doing the work.

Building CodeRaven put us inside the same development cycle our clients are navigating. We dealt with the same questions: where does AI create velocity and where does it introduce risk? How do you keep quality consistent when output volume goes up? What does a responsible AI-assisted development workflow actually look like day to day?

We built answers into the product. And we bring those answers into client conversations about software development, AI integration, and product strategy. That's the compounding value of building your own things. The experience doesn't stay in the product. It travels.

Who Should Take a Look

If your team is shipping code regularly, reviewing PRs manually, and starting to feel the pressure of that process at scale, CodeRaven is worth a close look. The use case is especially strong for teams that have already adopted AI coding assistants like Cursor or GitHub Copilot and are starting to notice what happens to review quality when code volume increases without a corresponding investment in the review process.

It's also a strong fit for agencies and consultancies delivering code to clients who need a consistent quality gate that doesn't depend on who happens to be available for review that week. Consistency is underrated. The difference between a team that reviews well and one that reviews inconsistently isn't talent. It's process. CodeRaven gives you a floor that holds regardless of who is on rotation.

Start at coderaven.io.

The Bigger Point

We didn't build CodeRaven because we spotted a market gap and moved to fill it. We built it because we wanted to understand, from the inside, what it actually means to develop software when AI is embedded in every layer of the workflow. The market validation was a plus. The experience was the point.

That's how SLIDEFACTORY has operated for the past two-plus years. We've been building with AI-driven development tools since before most agencies were talking about them seriously, not as observers waiting to see where it all landed, but as people who got in early and stayed in. The experience we've built along the way is what we bring into every client engagement that touches software development, AI integration, or product strategy.

CodeRaven is one output of that. It won't be the last.